Applying Analytics to Cybersecurity

In *Outside the Closed World: On Using Machine Learning for Network Intrusion Detection*, the authors write: "In network intrusion detection research, one popular strategy for finding attacks is monitoring a network's activity for anomalies: deviations from profiles of normality previously learned from benign traffic, typically identified using tools borrowed from the machine learning community. However, despite extensive academic research one finds a striking gap in terms of actual deployments of such systems: compared with other intrusion detection approaches, machine learning is rarely employed in operational "real world" settings."

This passage points to a problem: organizations still rely on intrusion detection, anti-malware, anti-spam, and firewalls by themselves to protect their infrastructure, “despite extensive academic research” and despite the availability of machine learning tools.

Consider the typical status quo approach to log monitoring. When a system reports that someone has failed to log in five times, that information does not by itself show that someone is trying to brute-force a login. Machine learning can use classification models to determine what kind of user that is and to model their normal behavior. The account could belong to a regular user, or even a superuser, who simply makes typing mistakes.
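As a rough illustration of the classification idea, here is a minimal sketch of a k-nearest-neighbors classifier written with only the Python standard library. The three features (failed attempts, distinct usernames tried, average seconds between attempts), the labels, and every number in `TRAINING` are assumptions made up for this example, not data from a real system.

```python
import math
from collections import Counter

# Hypothetical labelled history. Each entry is
# (failed attempts, distinct usernames tried, avg seconds between attempts).
TRAINING = [
    ((5, 1, 20.0), "typo"),
    ((4, 1, 15.0), "typo"),
    ((6, 1, 30.0), "typo"),
    ((5, 5, 0.5), "brute_force"),
    ((40, 12, 0.2), "brute_force"),
    ((8, 6, 1.0), "brute_force"),
]

def classify(sample, training=TRAINING, k=3):
    """Label a sample by majority vote among its k nearest training points."""
    # Features are used unscaled to keep the sketch short; a real model
    # would normalize them so that no single feature dominates the distance.
    nearest = sorted(training, key=lambda item: math.dist(item[0], sample))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]

# Both bursts contain exactly five failures, so a fixed threshold of five
# treats them identically. The extra features tell them apart.
slow_single_account = (5, 1, 25.0)
fast_many_accounts = (5, 4, 0.4)
print(classify(slow_single_account))
print(classify(fast_many_accounts))
```

The point of the sketch is only that the same count of failures can fall into different classes once more context is included.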

Machine learning is statistics, mathematics, and algorithms, and much of it is difficult to understand. But people who do understand the math have written it down as coded algorithms so that other people can use them. There are ML APIs for Apache Spark, Python, and other languages, which let someone plug in data without always having to understand the math behind it. It still takes some math knowledge to gain a basic understanding of how an algorithm works, so that the person looking at the results does not draw the wrong conclusion. Understanding these models requires some knowledge of linear algebra, series and sequences, matrices, statistics, and regular algebra. People who specialize in machine learning are called data scientists, but they tend to be PhD-level university researchers. It would suffice to have programmers who can read and absorb their academic papers.

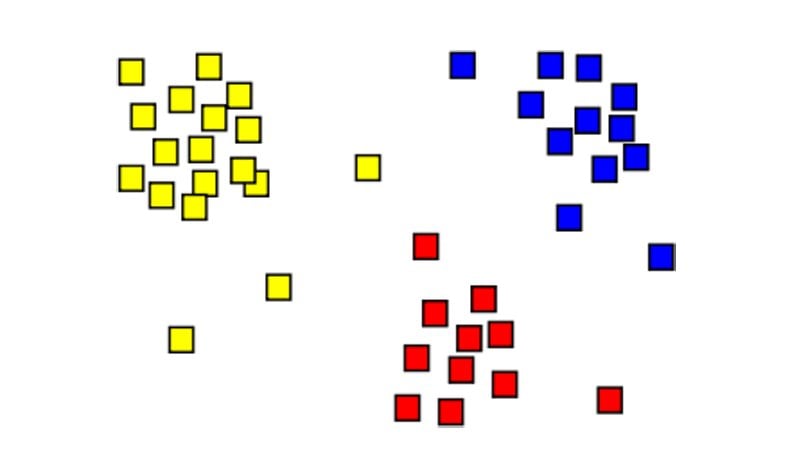

What machine learning brings to cybersecurity is the ability to look at events in their entirety rather than in isolation, and to find patterns and correlations between data points. Correlation means finding relations between variables, like time of the year (e.g., Black Friday) versus web traffic. Classification models find patterns that can then be used to classify behaviors as normal or abnormal, without relying only on fixed thresholds (e.g., 5 failed logins), which are often little better than guessing.
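To make the notion of correlation concrete, the following sketch computes the Pearson correlation coefficient by hand. The daily figures are hypothetical, and encoding "time of the year" as a simple sale-day flag is an assumption chosen to keep the example small.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

# Hypothetical daily figures: 1 marks a sale day such as Black Friday.
is_sale_day = [0, 0, 1, 0, 0, 1, 0]
web_requests = [1000, 1100, 5200, 950, 1050, 4800, 1000]

r = pearson(is_sale_day, web_requests)
print(round(r, 3))
```

A value close to 1 says that traffic rises on sale days, so a spike on such a day is expected rather than suspicious.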

Big data technology now lets us save far more information than we could in the past, because it uses distributed architectures spread across the low-cost commodity machines that power the cloud. So we can stream router data and every other bit of data and store it with little concern for space. For example, a popular log monitoring stack is Logstash, Elasticsearch, and Kibana (together called ELK). It is popular because it scales horizontally across a cluster of commodity machines, so it can log data from every machine in your organization and, as nodes are added, avoid running out of space or slowing down. ELK by itself does not have machine learning, but you can add a plugin so that log data flowing into ELK appears as an RDD, which is an Apache Spark data structure.

Spark supports command-line shells in Scala, Python, and R, so a programmer or data scientist can walk through the data, creating subsets and filtering items to focus on a particular metric or metrics. Then they fit the data into the format needed by the machine learning model, usually a matrix in n dimensions. That is how they take an idea from conception to implementation. For example, you could keep track of how many times each user on the network executes a program. It might look like this:

| User ID | User type | .dll or .exe | Times executed |
| --- | --- | --- | --- |
| 1 | admin | Some.dll | 17 |
| 2 | regular | Same.dll | 6 |
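One way to get from raw log events to such a matrix can be sketched in plain Python. The event tuples and the `build_matrix` helper are hypothetical; in a Spark shell the same counting would be done with RDD or DataFrame operations instead.

```python
from collections import Counter

# Hypothetical raw log events: (user id, user type, program name).
events = [
    (1, "admin", "Some.dll"),
    (1, "admin", "Some.dll"),
    (1, "admin", "other.exe"),
    (2, "regular", "Some.dll"),
]

def build_matrix(events):
    """Return (users, programs, matrix), where matrix[i][j] counts how often
    users[i] executed programs[j]."""
    counts = Counter((user, program) for user, _type, program in events)
    users = sorted({user for user, _type, _program in events})
    programs = sorted({program for _user, _type, program in events})
    matrix = [[counts[(u, p)] for p in programs] for u in users]
    return users, programs, matrix

users, programs, matrix = build_matrix(events)
print(programs)
for user, row in zip(users, matrix):
    print(user, row)
```

Each row is one user and each column one program, which is the n-dimensional layout most model libraries expect as input.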

A regular logging system might look at the statistical average and standard deviation of how many times this program runs. That is basic statistics, not machine learning. The system then sends out an alert that an analyst has to review manually. That is what, for example, ArcSight SIEM does. But this just creates a signal-to-noise problem: the security team cannot see what is important because there is so much data on the screen, the vast majority of which is trivial.
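The mean-and-standard-deviation approach described above can be sketched in a few lines. This is a generic illustration and not how any particular SIEM product implements its alerts; the user names and execution counts are invented.

```python
import statistics

def zscore_alerts(counts, threshold=3.0):
    """Return the users whose count lies more than `threshold` population
    standard deviations above the mean."""
    values = list(counts.values())
    mean = statistics.mean(values)
    stdev = statistics.pstdev(values)
    if stdev == 0:
        return []  # every user behaves identically, nothing stands out
    return [user for user, value in counts.items()
            if (value - mean) / stdev > threshold]

# Hypothetical executions of one program per user.
executions = {"u1": 17, "u2": 6, "u3": 8, "u4": 7, "u5": 9, "u6": 120}

# With only six users a single outlier cannot reach three standard
# deviations, so this example uses a lower, arbitrary threshold.
print(zscore_alerts(executions, threshold=2.0))
```

Note how much depends on the hand-picked threshold: set it slightly too low and the analyst is flooded, slightly too high and the alert never fires.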

Machine learning takes data points like these and makes observations that humans cannot readily see. For example, it can observe how user IDs and events cluster around certain data points. Then, given a training data set (taken from actual log events), the algorithm can flag alerts on new live data, using a much more sophisticated model that mimics the series of deductions a person would make.
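Clustering can be illustrated with a small k-means sketch in plain Python. The two features, the training points, and the rule of flagging anything farther than twice the largest training distance are all assumptions for this example; a production system would use a library implementation and a more careful outlier rule.

```python
import math

def kmeans(points, k, iterations=20):
    """Plain k-means; centroids start at the first k points for repeatability."""
    centroids = [tuple(p) for p in points[:k]]
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: math.dist(p, centroids[i]))
            clusters[nearest].append(p)
        new_centroids = []
        for i, members in enumerate(clusters):
            if members:
                new_centroids.append(
                    tuple(sum(axis) / len(members) for axis in zip(*members)))
            else:
                new_centroids.append(centroids[i])  # keep an empty cluster's centroid
        if new_centroids == centroids:
            break  # assignments have stopped changing
        centroids = new_centroids
    return centroids

def is_outlier(point, centroids, radius):
    """True when the point is farther than `radius` from every centroid."""
    return min(math.dist(point, c) for c in centroids) > radius

# Hypothetical training data: (program executions per day, MB sent per day).
training = [(6, 10), (17, 200), (7, 12), (16, 190), (5, 9), (18, 210)]
centroids = kmeans(training, k=2)

# Arbitrary rule: allow twice the largest distance seen during training.
radius = 2 * max(min(math.dist(p, c) for c in centroids) for p in training)

print(is_outlier((6, 11), centroids, radius))
print(is_outlier((90, 900), centroids, radius))
```

New live events are compared with the learned groups rather than with one global average, so behavior that is normal for one group of users is not mistaken for an anomaly in another.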

What indicators should one look at? One idea, as we just said, is the frequency distribution of program execution. Another is how many bytes a user sends out from their machine. If an employee in accounting who does nothing but accounting work has a spike in outbound traffic, their machine could be compromised and using sftp to send stolen data to a hacker’s command and control center.
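A per-user baseline for outbound traffic can be sketched with the median and the median absolute deviation (MAD), which are less distorted by a single extreme day than the mean and standard deviation. The history values, the `factor` of 5, and the `min_mad` floor are assumptions for illustration.

```python
import statistics

def is_spike(history, today, factor=5.0, min_mad=1.0):
    """True when today's value exceeds the user's own median by more than
    `factor` times the median absolute deviation of their history."""
    median = statistics.median(history)
    mad = statistics.median([abs(x - median) for x in history])
    mad = max(mad, min_mad)  # avoid a zero tolerance for perfectly flat histories
    return today > median + factor * mad

# Hypothetical MB sent per day by one accounting workstation.
history = [12, 15, 11, 14, 13, 12, 16]

print(is_spike(history, 14))
print(is_spike(history, 900))
```

Because the baseline is computed per user, a volume that is routine for a backup server would still stand out on a workstation that normally sends very little.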

From here, continued reading is advised. Good sources on how to apply machine learning to cybersecurity are the paper quoted above and the articles on KDnuggets. The material is complicated. You will probably have to code your own ML logic to analyze logs, as the market is woefully short of products that do that. And the products that do use some ML tend not to explain it. How can you trust what a model is telling you if you do not know the logic on which it is based?

Graphics source: Wikipedia

Karolis Liucveikis

Experienced software engineer, passionate about behavioral analysis of malicious apps

Author and general operator of PCrisk's News and Removal Guides section. Co-researcher working alongside Tomas to discover the latest threats and global trends in the cyber security world. Karolis has experience of over 8 years working in this branch. He attended Kaunas University of Technology and graduated with a degree in Software Development in 2017. Extremely passionate about technical aspects and behavior of various malicious applications.

PCrisk security portal is brought by a company RCS LT.

Joined forces of security researchers help educate computer users about the latest online security threats. More information about the company RCS LT.

Our malware removal guides are free. However, if you want to support us you can send us a donation.
